After years of hype, the one thing AI will never do is erase the physical limits of America’s power grid and chip supply chain, no matter how loudly the consultants sell it.

At a Glance

- Analysts say 2026 begins an AI “digestion phase,” where infrastructure—not imagination—sets the pace.

- Power generation, grid interconnections, and transformer shortages are emerging as binding constraints for data centers.

- High-bandwidth memory and advanced chip packaging capacity remain tight, limiting how fast new systems can scale.

- Many enterprise AI pilots still fail to reach production due to data quality, legacy integration, and talent scarcity.

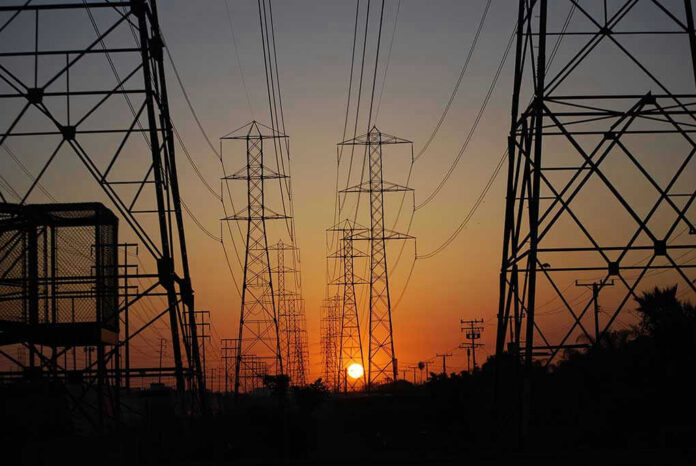

AI Hits a Hard Wall: Energy and Grid Capacity

Utilities and grid operators have become the quiet gatekeepers of AI’s future: training and running large models draws huge, steady electrical loads, and data centers can’t scale on speeches alone. Research pointing to 2026-2028 as a “crisis window” emphasizes that interconnection queues, transmission buildouts, and transformer availability are slow, real-world bottlenecks. Software improvements can reduce compute per task, but they cannot conjure new generation or rebuild grid infrastructure overnight.

That constraint matters for consumers, too, because energy scarcity tends to show up as higher prices and reliability concerns. When policymakers promise “AI leadership” without matching it to permitting reform and energy expansion, the public gets the bill while the hype continues. The research also notes that relief from major new baseload options, including nuclear, is more plausible in the 2030s—too late to prevent a near-term squeeze if demand keeps rising.

Chip Reality Check: HBM Memory and Advanced Packaging

Hardware supply chains are another place where AI can’t wish away scarcity. The research highlights how high-bandwidth memory (HBM) and advanced chip packaging have become chokepoints, with capacity at key suppliers described as effectively booked through 2026. It also notes that Nvidia’s demand consumes a major share of advanced packaging capacity, illustrating how concentrated these pipelines are. Even with capital available, factories and specialized tooling take time to build.

For businesses, this means the AI race is increasingly about allocation, prioritization, and cost control rather than endless, exponential scaling. When the same limited components are needed across hyperscalers and enterprise buyers, smaller players can get priced out or delayed. That reality undercuts the “every company will run frontier AI everywhere” talking point and pushes organizations toward narrower deployments, smaller models, and efficiency strategies while the supply chain catches up.

Why Many AI Pilots Still Don’t Make It to Production

Enterprise deployment friction may be less flashy than GPUs, but it can be just as decisive. The research cites persistent failures to scale AI pilots into production due to data quality problems, legacy system integration, and a shortage of specialized talent, including a small global pool of elite AI engineers. These issues don’t vanish with bigger models. If a company’s internal data is messy or siloed, AI can amplify errors faster than it fixes them.

Workforce impacts are also more complicated than the old “replace workers” narrative. Research on 2026 trends describes organizations wrestling with trade-offs between efficiency and meaning, alongside the need to build “change fitness” so workers can adapt. Another analysis argues companies should re-architect workflows around AI capabilities rather than treating AI like a traditional labor substitute. For leaders, the practical question is governance: who is accountable when an AI-driven process fails?

Deepfakes Get Cheaper While Trust Gets Harder

While infrastructure limits slow some forms of scaling, other capabilities spread fast—especially synthetic media. Research tracking 2026 concerns highlights that deepfakes are becoming routine and cheap, intensifying the challenge of verifying what’s real in politics, business, and everyday life. That creates pressure for stronger authentication norms, better provenance tools, and clearer organizational policies. The risk is social: when trust collapses, people disengage or default to tribal filters.

Put together, the research points to a central conclusion: AI will not “transcend” thermodynamics, supply chains, or human responsibility. The immediate story of 2026 is not a sci-fi singularity; it’s a practical test of utility under constraints—power, hardware, labor, and governance. In a country tired of expensive fantasies and top-down planning, the policy debate will come down to basics: build energy, modernize infrastructure, demand accountability, and stop pretending slogans can replace reality.

Sources:

- Why AI is slowing down in 2026

- AI trends for 2026: Building change fitness and balancing trade-offs

- Stanford AI experts predict what will happen in 2026

- International AI Safety Report 2026

- 11 things AI experts are watching in 2026

- Artificial intelligence (AI) challenges